WHAT'S NEWS

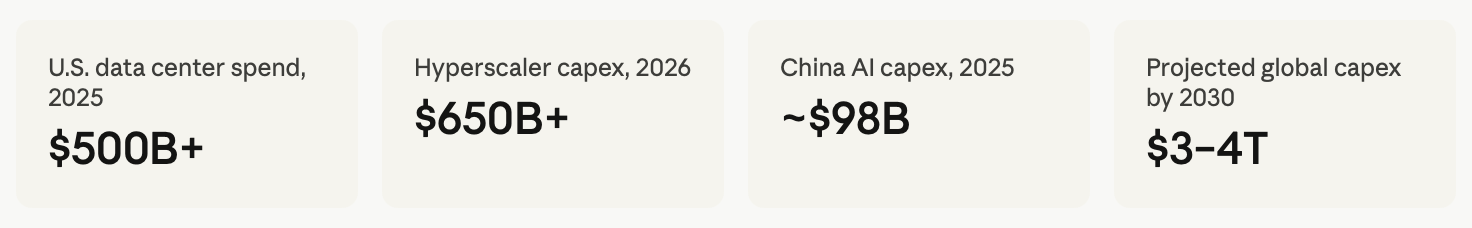

Tech giants including Microsoft, Amazon, Alphabet, and Meta plan to deploy over $650 billion in capital expenditures in 2026 alone to expand AI computing capacity, a figure that eclipses the annual GDP of most nations and suggests this is no ordinary technology cycle.

The Trump administration reversed a ban on Nvidia chip sales to China in mid-2025, with Commerce Secretary Howard Lutnick arguing the strategy was to get Chinese developers "addicted to the American technology stack" - a framing that drew furious pushback in Beijing and complicated years of bipartisan export control policy.

Chinese AI companies like DeepSeek openly acknowledge that chip restrictions are their primary constraint, requiring them to use 2–4 times more power to achieve results comparable to U.S. firms - yet that constraint has also spurred the kind of algorithmic efficiency breakthroughs that rattled Western markets in January 2025.

The last time the world witnessed a construction frenzy of this character, it was laying rail across a continent. Before that, it was stringing telegraph wire across oceans. Now, humanity is building something stranger and arguably more consequential: a planetary nervous system of silicon, fiber, and cooling towers, optimised for one purpose - training and running artificial intelligence at scale.

When Nvidia executives forecast that global data center capital expenditures will rise to $3–4 trillion by 2030, they are describing a transformation on the scale of the great industrialization cycles of the past century: a multi-fold increase from current spending levels, to a total that exceeds the annual GDP of most countries. These are not figures lifted from promotional materials. They are backed by committed capital from the largest corporations in human history.

The question this raises - for investors, for policymakers, and for anyone with a stake in which country shapes the next fifty years of technological civilization - is not whether this infrastructure will be built. It will. The question is who builds it, who controls it, and on whose terms the world's AI future runs.

The Compute as Power Doctrine

To understand the AI infrastructure race, one must first understand the fundamental unit of the contest: compute. Not code, not data, not even talent - though all three matter. What separates frontier AI systems from everything that preceded them is raw computational power, measured in floating-point operations per second, delivered by specialised semiconductors operating inside climate-controlled buildings that consume as much electricity as mid-sized cities.

A single ChatGPT query consumes roughly ten times the electricity of a traditional Google search, according to Goldman Sachs. Multiply that by billions of queries per day, multiply that by thousands of organisations building their own AI applications, and the infrastructure requirement becomes staggering.

U.S. data-center spending alone exceeded half a trillion dollars in 2025. The United States leads the world in AI infrastructure build-out and planned investment, followed by China, with other economies - notably Europe - lagging considerably.

The physical scale of this construction project defies easy analogy. Every fabrication plant requires thousands of skilled tradespeople - pipefitters, electricians, clean-room installers - to build controlled environments that meet atomic-level tolerances. GDP growth from AI-infrastructure construction is now visible across manufacturing, logistics, and energy sectors. Transformer lead times, once measured in months, now stretch past two years. Governments are offering subsidies that rival those once reserved for the automobile and aerospace industries.

The Magnificent Seven's $21.5 Trillion Bet

The financial architecture of this construction boom is essentially a collective wager by the world's most valuable companies that compute infrastructure is the most strategically important asset class of the 21st century.

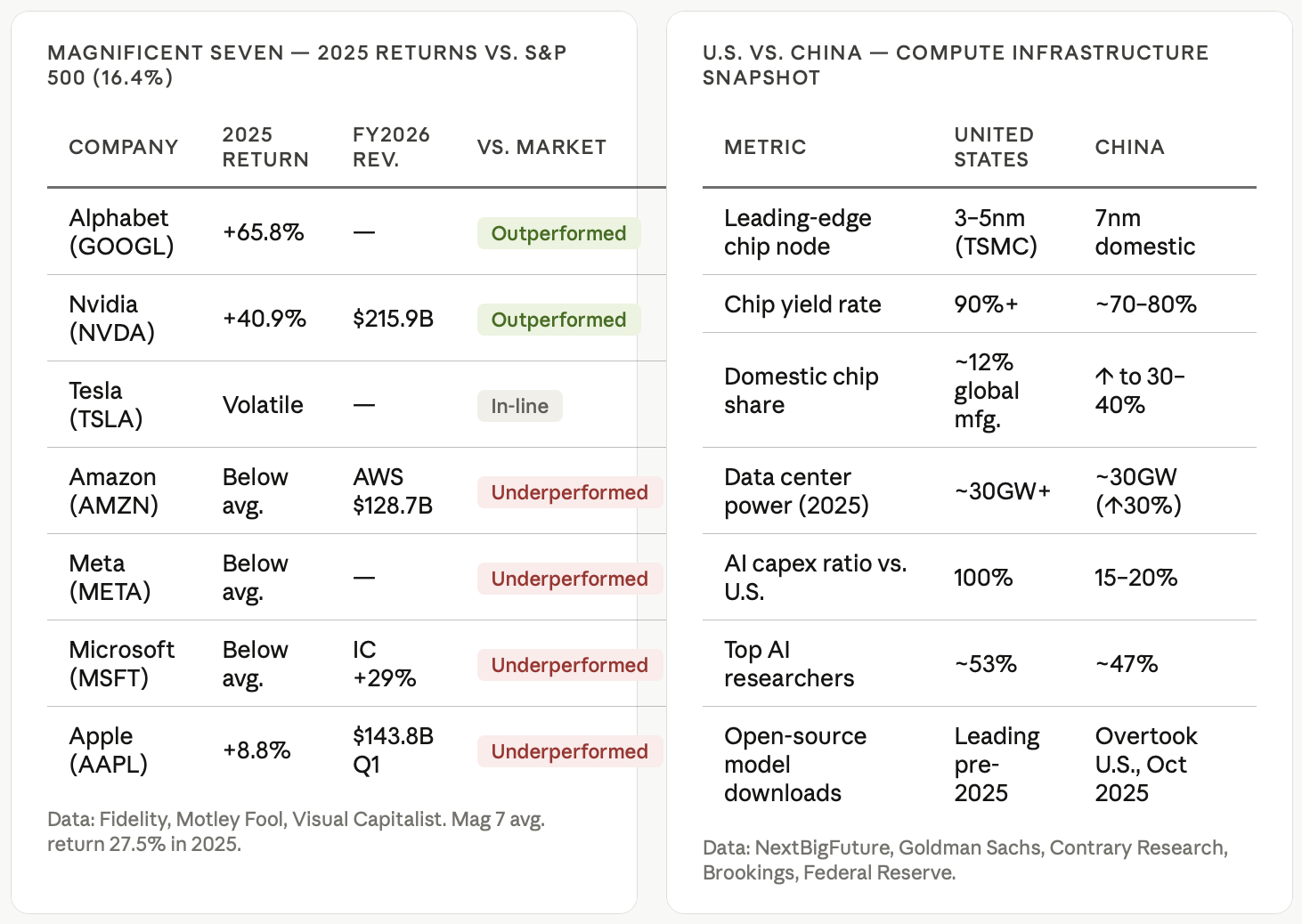

The combined market capitalisation of the Magnificent Seven - Alphabet, Apple, Amazon, Meta, Microsoft, Nvidia, and Tesla - stood at approximately $21.5 trillion as of November 2025, representing about 16% of the total value of all global stocks. The majority of that value rests, explicitly or implicitly, on an AI thesis.

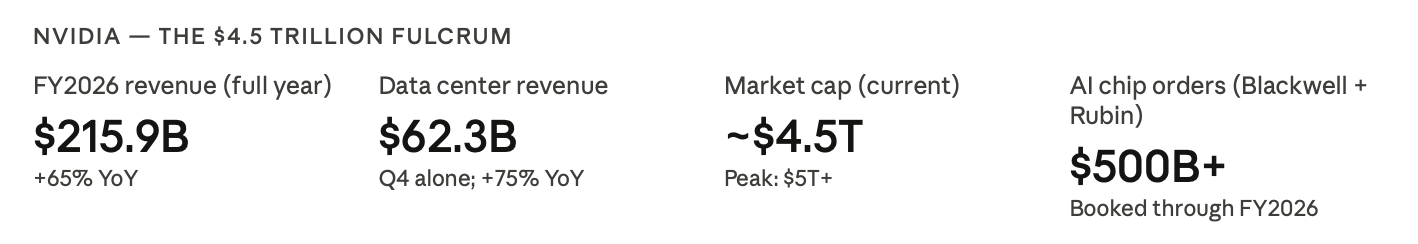

Nvidia posted fiscal 2026 revenue of $215.9 billion, up 65%, with data center revenue at $62.3 billion in the fourth quarter alone, up 75% year over year. Jensen Huang's company has become the defining enterprise of the era - not merely a chip supplier but the gatekeeper of the entire AI stack. Its CUDA programming framework, developed over two decades, creates an ecosystem lock-in that rivals Microsoft's grip on enterprise software in the 1990s. Nvidia currently holds an estimated 90% of the data center AI market - though that dominance is under pressure, as its biggest customers are developing their own chip infrastructure rather than remaining indefinitely dependent on a single supplier.

The three-year bull market has been led by the tech giants, with Nvidia, Alphabet, Microsoft, and Apple alone accounting for more than a third of the S&P 500's gains since the run began in October 2022. But enthusiasm is cooling. Profits for the Magnificent Seven are expected to climb about 18% in 2026, the slowest pace since 2022 and not much better than the 13% rise projected for the other 493 companies in the index.

The market, in other words, is beginning to ask the question that every honest participant in this boom is quietly wrestling with: when does the infrastructure investment generate sufficient returns to justify its cost?

Goldman Sachs estimates capital expenditure on AI will hit $390 billion in 2025 and increase by another 19% in 2026. The lion's share of that money goes to roughly 10 AI companies that are interlocked as customers and investors in an "increasingly circular" way, with billions in equity stakes, revenue sharing, and vendor financing passing back and forth among them.

This circularity is worth scrutinizing. When OpenAI buys compute from Microsoft, Microsoft invests in OpenAI, and Nvidia's revenues are partly sustained by both, the revenue numbers start to resemble a closed loop rather than evidence of genuine external economic value creation. History offers a cautionary parallel: the railroad boom of the 1840s produced extraordinary infrastructure, enormous fortunes, and catastrophic over-capitalisation, all simultaneously.

The Geopolitics of the GPU

No understanding of the AI infrastructure race is complete without confronting its most consequential dimension: it is not only a commercial contest but also a strategic arms race between the United States and China.

The United States largely controls the supply of the most important chips, but China largely controls the supply of renewable energy products - a key means of quickly and sustainably powering them. This sets up an interesting dichotomy in the AI race.

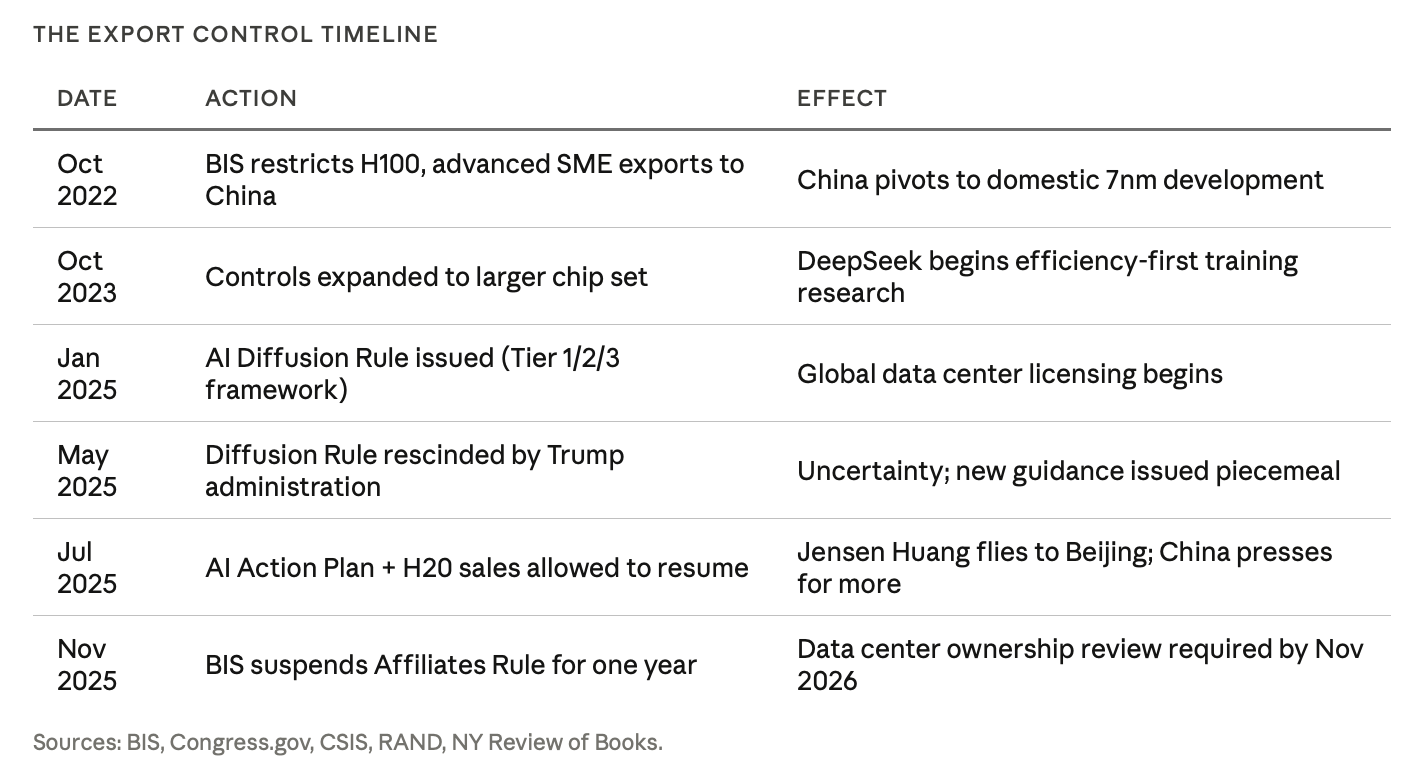

The U.S. strategy has been to weaponize its position at the top of the semiconductor supply chain. Beginning in October 2022, the United States issued major export control updates restricting China's access to high-performance chips and semiconductor manufacturing equipment. The stated goal was to retain control over so-called "chokepoint" technologies in the global semiconductor supply chain.

The policy has produced paradoxical results. Scarcity fosters innovation. As a direct result of U.S. controls on advanced chips, companies in China are creating new AI training approaches that use computing power very efficiently. Faced with relative computing scarcity, engineers at DeepSeek directed their energies toward new methods to train AI models efficiently - a process described in a technical paper posted to arXiv in late December 2024.

The technical specifics are significant. DeepSeek implemented a mixture-of-experts (MoE) architecture that, unlike dense designs that run the whole network on every input, selectively activates only the most relevant neural network components for each input - enabling its R1 model to be competitive with OpenAI's o1 model while using significantly less compute. This is not merely a clever engineering trick. It is a fundamentally different philosophy of AI development - one born of necessity - and it has implications for the entire global industry's assumptions about compute scaling.
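For readers who want to see the mechanism, the idea of activating only a few components per input can be sketched in a few lines of Python. This is a toy illustration of generic top-k expert routing, not DeepSeek's actual implementation: the function names (`top_k_route`, `moe_forward`), the four stand-in "experts," and the hand-picked gate weights are all assumptions made for the example.

```python
import math

def softmax(scores):
    """Turn raw gate scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(gate_scores, k):
    """Pick the k highest-scoring experts and renormalise their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_weights, k=2):
    """Run only the k selected experts on x and blend their outputs."""
    # One gate score per expert: dot product of that expert's gate row with x.
    gate_scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routes = top_k_route(gate_scores, k)
    out = [0.0] * len(x)
    for idx, weight in routes:
        y = experts[idx](x)  # the unselected experts are never evaluated
        out = [o + weight * yi for o, yi in zip(out, y)]
    return out, [idx for idx, _ in routes]

# Hypothetical stand-ins: each "expert" just scales its input by a constant.
experts = [lambda x, s=s: [s * xi for xi in x] for s in (1.0, 2.0, 3.0, 4.0)]
gate_weights = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0], [-1.0, 0.0]]

output, active = moe_forward([1.0, 0.0], experts, gate_weights, k=2)
print(active)  # indices of the two experts that actually ran
```

In this toy setup only two of the four experts do any work for the input, which is the source of the compute savings: the model's total parameter count can grow while the cost of handling any single input stays roughly fixed.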

China's AI sector continues to struggle under the combined weight of U.S. export controls and internal shortfalls. Major Chinese AI firms, including DeepSeek, report struggling to improve their models due to a lack of advanced computing power. This shortage has forced Chinese firms into complex and costly solutions, including pursuing parallel computing - splitting a computational task into smaller pieces to run on multiple chips - and using more energy over a longer period.

Then came the diplomatic reversal. In mid-2025, President Trump reversed the ban on Nvidia chip sales to China. Nvidia CEO Jensen Huang flew to Beijing and announced, "We will start selling H20s to the Chinese market very soon." He praised cutting-edge AI models built by Chinese firms like DeepSeek and Alibaba. This reversal was remarkable because, as observers noted, it undercut the strategic logic of years of American policy toward China - policy designed precisely to prevent Beijing from accessing advanced American semiconductor technology.

Beijing was not grateful. Chinese commentators drew parallels to the Opium Wars. The Chinese government pressed Nvidia for more advanced chips. China raised security concerns about the H20 and effectively blacklisted another chip Nvidia had designed for China, while reportedly pressing to relax controls on HBM memory - a technology some experts see as a key chokepoint for China's ability to produce advanced chips including Huawei's Ascend series.

The episode encapsulates the central tension of the AI infrastructure race: the United States possesses structural technological advantages that it now seems unsure whether to maintain.

China's Counter-Build

China is not standing still. In 2025, China's total AI capital expenditure reached as much as $98 billion. The government was a leading driver of this investment, contributing an estimated $56 billion through various funds. China is spending $50–70 billion in annual subsidies for AI chips and data centers via Big Fund III, and is focused on 7nm nodes for AI training and inference.

The results are beginning to show. Alibaba and China Telecom launched a data center in southern China powered by Alibaba's own Zhenwu semiconductors, designed for AI training and inferencing, capable of supporting AI models of hundreds of billions of parameters. These are among the largest models in existence, underscoring how China's biggest tech players are advancing their own AI semiconductor technology.

Electricity capacity for data centers in China is on course to jump 30% in 2025, to 30 gigawatts. Measured against the U.S., China's absolute AI capex remains comparatively modest, roughly 15–20% of what U.S. hyperscalers are expected to spend. But China's approach is more targeted: its companies are spending less and focusing their AI on industries they believe will drive revenue growth and a return on investment.

This is not unambiguously a weakness. The history of industrial competition suggests that focused, capital-disciplined challengers frequently outperform sprawling incumbents over long time horizons. China's domestic chip industry, moreover, is not stagnant. Cambricon Technologies reported first-half 2025 revenue of 2.88 billion yuan, a 4,347% year-over-year increase. Its chips use a domestic 7nm process and 2.5D chiplet packaging, with specifications reportedly matching the Nvidia H100 at around 40% lower cost.

There are also serious cautionary data points. China poured billions into AI infrastructure in what became a data center gold rush, driven largely from the top down and often with little regard for actual demand or technical feasibility. Many projects were led by executives and investors with limited expertise in AI infrastructure. As a result, GPU rental prices have dropped to an all-time low.

This is the other lesson of every great infrastructure boom: the construction frenzy and the economic returns are not the same thing. Building the railroad does not guarantee that the railroad runs profitably.

The Power Problem: An Overlooked Chokepoint

Discussions of the AI infrastructure race frequently fixate on chips. They give insufficient attention to the resource that actually powers them.

Global data center power demand is expected to rise to a record 1,596 terawatt-hours by 2035 - a 255% increase from 2025 levels. The U.S. is set to remain the leader in energy consumption with a 144% surge in demand over this period, to 430 terawatt-hours. China's demand is projected to rise 255%, to 397 terawatt-hours.
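The 2025 starting points behind these projections are not stated, but they can be backed out from the figures given. The short Python sketch below does that arithmetic, reading "an X% increase" as target = baseline × (1 + X/100). The resulting baselines are derived values under that assumption, not numbers reported by the underlying research, and the function name `implied_baseline` is made up for the example.

```python
def implied_baseline(target_twh, pct_increase):
    """Back out the starting level implied by a target and a percentage rise."""
    return target_twh / (1 + pct_increase / 100)

# (2035 demand in terawatt-hours, projected % increase from 2025), as cited above.
projections = {
    "Global": (1596, 255),
    "United States": (430, 144),
    "China": (397, 255),
}

baselines = {
    region: implied_baseline(target, pct)
    for region, (target, pct) in projections.items()
}

for region, twh in baselines.items():
    print(f"{region}: about {twh:.0f} TWh in 2025")
# Global: about 450 TWh in 2025
# United States: about 176 TWh in 2025
# China: about 112 TWh in 2025
```

On that reading, the United States starts from the larger base, which is why it can remain the bigger consumer in 2035 even though China's demand is projected to grow faster in percentage terms.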

China is currently ahead of the United States in generating and building out power infrastructure to support AI data centers - a phenomenon sometimes described by industry observers as an "electron gap." China's rapid, centralised expansion of electricity generation, including both massive renewable projects and traditional, dispatchable power, has created a significant capacity advantage.

China dominates the manufacturing of solar panels and the batteries needed to store solar power, giving it a renewable energy manufacturing advantage that the United States is unlikely to match in the near term. The United States possesses abundant natural gas, but gas turbine lead times from GE Vernova now stretch years into the future.

This creates a structural vulnerability in the American AI infrastructure build-out that chips and software alone cannot resolve. Compute requires electrons. Without a credible energy policy, the United States is building a high-performance engine without assurance of fuel.

The Ethics Block: Who Governs the Infrastructure of Intelligence?

There is an ethical dimension to the AI infrastructure race that market commentary tends to elide by treating the race as merely a geopolitical and financial story. It is more than that.

The infrastructure being constructed is not merely a platform for commerce. It is the physical substrate of decision-making systems that will, over the coming decade, determine access to credit, medical diagnoses, legal outcomes, and military targeting. The question of who controls this infrastructure is therefore inseparable from questions of accountability, sovereignty, and democratic governance.

The U.S. AI Diffusion Framework - subsequently rescinded and replaced with provisional guidance - attempted to create a global licensing framework grouping countries into three tiers for access to advanced AI chips, with Tier 1 including the U.S. and 18 allied nations. The explicit goal was not merely commercial advantage but the creation of a "secure global ecosystem" for AI data centers.

The ambition is coherent. The execution has been erratic. The rescission of the Diffusion Rule in 2025 left allied nations uncertain about long-term supply commitments and handed Beijing a propaganda win about the reliability of American technology partnerships.

There is also the domestic governance question. The concentration of AI infrastructure ownership in seven corporations is, historically, extraordinary. No previous critical infrastructure - railroads, telephony, electrical grids - was ever constructed with this degree of corporate concentration at the outset. The regulatory frameworks that eventually governed those earlier infrastructures were built after the fact, often in response to abuses that proved difficult to reverse. The moment for structural thinking about AI infrastructure governance is now, not after the consolidation is complete.

This is not an argument against the build-out. The potential benefits of advanced AI in medicine, climate science, logistics, and education are genuinely profound. It is an argument for the proposition that infrastructure investment at this scale, with these geopolitical stakes, warrants public deliberation commensurate with its significance.

The Skeptical Optimist's Verdict

The AI infrastructure race is real, consequential, and in many respects admirable - a demonstration of the extraordinary capacity of market economies and industrial democracies to mobilize capital toward transformative ends. The primary beneficiaries include semiconductor manufacturers like Nvidia and AMD, hyperscale technology companies, data center REITs that own the physical facilities, and utility companies providing power. For patient investors with appropriate risk tolerance and sufficiently long time horizons, the case for exposure to these sectors remains compelling.

The skeptic's case is not that this infrastructure will not be built, or that it will not generate real economic value. It is that the current pace of capital deployment has outrun both the revenue models that would justify it and the governance frameworks that would make it legitimate.

The power loom transformed textile manufacturing and immiserated an entire generation of craftspeople before the economic gains were broadly distributed. The railroad boom produced magnificent infrastructure and catastrophic financial panics. The internet created the information economy and concentrated advertising revenue in two companies. Each of these transitions was, in retrospect, worth it. None of them were as clean as the investors who funded them anticipated.

The AI infrastructure wars will likely follow a similar trajectory: genuinely transformative, uneven in their benefits, poorly governed in their early phases, and - in the end - worth it. The sophisticated bet is not whether to participate. It is understanding which participants capture the value, and when.

In October 2025, China overtook the United States in monthly open-source AI model downloads, thanks to more customizable tools and licensing strategies that steer developers away from Western alternatives. The contest is not over. It has barely begun. And unlike previous technology races, this one has no clean finish line - only an expanding frontier of compute, power, and strategic consequence that will define the century.

The Wall Street Journal's technology coverage examines the intersection of innovation, capital, and geopolitical consequence. Data in this article is drawn from Goldman Sachs research, Federal Reserve economic notes, the Brookings Institution, RAND Corporation, Congressional Research Service, NextBigFuture, and SEC filings current as of April 2026.